QA for a Software Development AI Assistant

Transforming an unstable AI solution into a developer-ready product focused on accuracy, adaptability, and usability in real development workflows, achieving 52% fewer misleading replies and a 29% increase in user satisfaction.

About the project

Solution

Exploratory testing, Functional testing, Usability testing, Localization testing, API testing, Automation testing, Regression testing

Technologies

ChatGPT, Gemini, JMeter, PyCharm, Selenium, Postman

Country

United States

Industry

Client

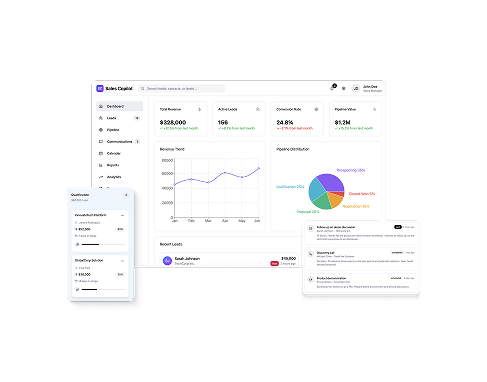

Our client for this project is a global provider of data visualization tools used by development teams to build interactive charts and dashboards for web applications. Their new public AI assistant is designed to help developers generate code examples, configure visual components, and navigate technical documentation more efficiently. The company wanted to evaluate real-world usability and execution quality before unveiling the product to a wider audience.

Project overview

Take advantage of our QA expertise and release with confidence.

Before

- Generic AI responses

- Reactive assistance only

- Inconsistent AI outputs

- Single-level explanations

After

- Context-aware answers

- Proactive suggestions

- Stable responses

- Adaptive answer depth

Project Duration

8 weeks

Team Composition

1 QA Lead, 1 Manual QA, 1 Automation QA

Challenge

The AI assistant was publicly available and already supported basic documentation queries and code generation. However, its behavior remained largely reactive and uniform: it offered the same depth of answers regardless of the developer's experience, framework, or task complexity. This limited its value as a productivity tool and left it functioning more like a searchable knowledge base.

At the same time, preliminary competitor research showed that developer-facing AI tools were rapidly moving toward proactive guidance, contextual awareness, and personalized experiences. To stay relevant and scalable, the assistant needed to move beyond baseline correctness and demonstrate adaptive behavior, consistent output quality, and proven real-world usability.

Key challenges

- Trust-building limitations. A lack of explainability reduced confidence, especially among advanced users who needed deeper recommendations.

- Low adaptability by skill level. Beginners and advanced users received the same response depth.

- Reactive-only behavior. The assistant did not suggest optimizations or alternatives proactively.

- Weak framework awareness. Limited differentiation between React, Angular, and vanilla JS scenarios.

- Inconsistent response stability. Identical prompts produced answers that varied in structure and depth.

- No persistent memory. User preferences and dialog context were not retained between sessions.

- English-only interaction. Prompts in other languages were not supported, which limited accessibility for a global audience.

Solutions

To address the identified gaps, the QA team designed and executed a structured AI-focused testing strategy centered on real developer behavior, prompt-driven interaction flows, and framework-specific usage patterns. The work combined exploratory AI evaluation, persona-based testing, automation, and competitive analysis to validate how the assistant performs in practical development scenarios.

Our approach focused on strengthening response accuracy, contextual behavior, adaptability, and consistency, while also validating backend behavior, UI stability, and automation coverage for repeatable quality control at the public interface level.

What we did

- Delivered structured technical documentation and a prioritized improvement roadmap based on testing results.

- Built real-world AI test scenarios covering core developer workflows and documentation use cases.

- Executed persona-based testing for beginner and advanced developer profiles.

- Validated framework-specific behavior across React, Angular, and vanilla JavaScript use cases.

- Performed AI response accuracy and consistency testing using repeated prompt execution.

- Conducted proactivity and context-awareness testing to assess missed optimization opportunities.

- Verified backend API behavior and edge cases through structured API testing.

- Automated critical AI interaction flows for stable regression coverage.
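Repeated prompt execution only produces a consistency figure once "the same answer" is defined. The sketch below is one possible way to do that, assuming Python and a hypothetical structural fingerprint (number of code blocks, bullet lines, and paragraphs); it is an illustration, not the project's actual metric. Each prompt is sent several times, and the share of responses whose fingerprint matches the most common one is reported.

```python
import re
from collections import Counter

# Built at runtime so the three backticks do not clash with document fences.
FENCE = "`" * 3


def structure_signature(response: str) -> tuple:
    """Reduce a response to a coarse structural fingerprint.

    Hypothetical heuristic: wording is ignored on purpose, and only the
    shape of the answer (code blocks, bullet lines, paragraphs) is compared.
    """
    code_blocks = response.count(FENCE) // 2
    bullet_lines = len(re.findall(r"^[ \t]*[-*] ", response, flags=re.MULTILINE))
    paragraphs = len([p for p in re.split(r"\n\s*\n", response.strip()) if p.strip()])
    return (code_blocks, bullet_lines, paragraphs)


def consistency_rate(responses: list[str]) -> float:
    """Share of responses whose signature matches the most common signature."""
    if not responses:
        raise ValueError("need at least one response")
    signatures = Counter(structure_signature(r) for r in responses)
    _, most_common_count = signatures.most_common(1)[0]
    return most_common_count / len(responses)


if __name__ == "__main__":
    # Three hypothetical answers to the same prompt: two share a structure.
    runs = [
        "Use a line chart.\n\n- set x axis\n- set y axis",
        "Use a line chart.\n\n- set x axis\n- set y axis",
        "Use a line chart with x and y axes configured.",
    ]
    print(consistency_rate(runs))
```

A structural fingerprint is deliberately tolerant of rewording, so it flags answers that change format or depth between runs, which is the kind of variation described in the challenges, without penalizing harmless paraphrasing.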

Technologies

The choice of tools and technologies matters as much to a project's success as the expertise of the QA engineers. We pick only the most relevant, proven tools to support our comprehensive testing strategy.

- ChatGPT

- Gemini

- JMeter

- PyCharm

- Selenium

- Postman

Types of testing

Exploratory testing

Checking AI behavior with scenarios that simulate real developer workflows.
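Scenario-based checks of this kind can be organized as a matrix of developer profiles, frameworks, and tasks. The sketch below assumes Python, and every persona, task, and marker list in it is hypothetical, chosen only to mirror the beginner/advanced profiles and the React, Angular, and vanilla JavaScript split mentioned above. It also includes a rough marker-based check of whether generated code targets the requested framework.

```python
from dataclasses import dataclass
from itertools import product

# Hypothetical dimensions; a real matrix would come from the project's own
# persona definitions and workflow inventory.
PERSONAS = ("beginner", "advanced")
FRAMEWORKS = ("react", "angular", "vanilla-js")
TASKS = ("render a line chart", "add a tooltip", "update data in real time")

# Assumed, deliberately rough markers for component frameworks only.
# Vanilla JS has no distinctive marker, so it is treated as "neither".
FRAMEWORK_MARKERS = {
    "react": ("import React", "useState", "useEffect", "className="),
    "angular": ("@Component", "@NgModule", "ngOnInit"),
}


@dataclass(frozen=True)
class Scenario:
    persona: str
    framework: str
    task: str

    def prompt(self) -> str:
        """Build the prompt text sent to the assistant for this scenario."""
        return f"Persona: {self.persona} developer. Framework: {self.framework}. Task: {self.task}."


def build_matrix() -> list[Scenario]:
    """Every persona x framework x task combination (2 x 3 x 3 = 18)."""
    return [Scenario(p, f, t) for p, f, t in product(PERSONAS, FRAMEWORKS, TASKS)]


def detected_frameworks(code: str) -> set[str]:
    """Component frameworks whose markers appear in a generated code sample."""
    return {fw for fw, markers in FRAMEWORK_MARKERS.items() if any(m in code for m in markers)}


def matches_framework(scenario: Scenario, code: str) -> bool:
    """Pass if the code targets the requested framework and not another one."""
    found = detected_frameworks(code)
    if scenario.framework == "vanilla-js":
        return not found
    return found == {scenario.framework}


if __name__ == "__main__":
    for scenario in build_matrix()[:3]:
        print(scenario.prompt())
```

Generating the matrix instead of hand-writing prompts keeps coverage explicit: adding a persona or framework expands every task automatically, and the same list can feed the repeated-execution and regression runs.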

Results

In 6 weeks, we delivered a clear, data-backed picture of how the AI assistant performs in real developer workflows and where its behavior directly affects adoption, trust, and productivity. By running the same scenarios repeatedly, testing with different user profiles, and backing the checks with automation, we established clear quality criteria for accuracy, consistency, adaptability, and framework-specific behavior.

After the testing strategy was applied and key interaction flows were refined, the assistant showed clear improvements in response stability, relevance, and overall workflow efficiency. It is now being adopted by more developers and is receiving mostly positive feedback.

2.3x more consistent answers

38% increase in response relevance

52% fewer misleading replies

29% increase in user satisfaction

Ready to enhance your product’s stability and performance?

Schedule a call with our Head of Testing Department!

Bruce Mason

Delivery Director